- Home

- About Us

- Work

- Journal

- Contact

- Create bootable usb from iso ubuntu command line

- Contoh soal momentum impuls dan tumbukan

- Maroon 5 girls like you list of girls

- Linear regression equation explained math is fun

- Moyes and schulte principles of animal physiology pdf

- Best spotify music converter for mac

- Medcurso pre-o

- Photoshop cs6 full crack sinhvienit

- Avira free antivirus download 2016

- Profecias de enrique castillo rincon pdf

- Where is my library folder on mac

- Andy from black veil brides

- Sony a7 vs canon 7d review

- Mac word processor support docx

- Xbox 360 skyrim how to get married

- Seagate 2tb internal hard drive not detected

- Voipdiscount call charges

- How to change text color using dictation on mac

- Caroline pennell as long as you love me lyrics

- Wow hits 2016 christian songs

- Microsoft skype for business startup

- Vlc media player convert mkv to mp4

- Apple store microsoft office 2016

- Backup camera for bmw

- Yu gi oh season 1 episode 8 english dub

- Callaway x hot driver 13-5

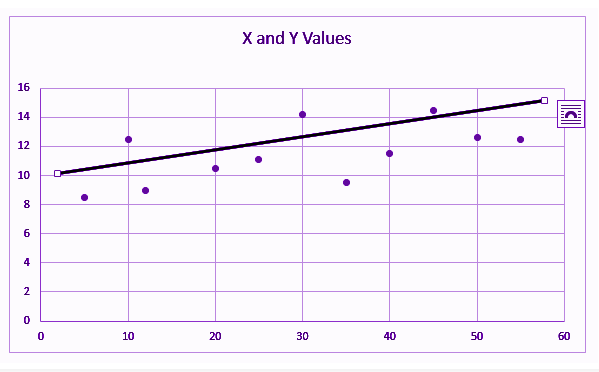

Linear regression is a method for determining the best-fitting line through a set of data. In a lot of ways, it’s similar to a correlation, since the same correlation statistics are still used. The one difference is that the purpose of regression is prediction.

The best-fitting line is calculated by minimizing the total squared error between the data points and the line. The equation used for regression is $y = a + bx$, or some variation of that, where $b$ is the slope and $a$ is the y-intercept. If you remember from algebra class, this formula is like $y = mx + b$ (there $m$ is the slope and $b$ is the y-intercept). This is because they are both the linear equation. Although you may be asked to report the correlation statistics, the purpose of regression is to find the values for the slope and the y-intercept that create the line that best fits the data.

Regression equations make a prediction, and the precision of the estimate is measured by the standard error of the estimate. The standard error of the estimate is a measure of the accuracy of predictions made with a regression line, and it depends on how widely the data points are scattered (the strength of the correlation). In other words, it tells you how far away the points tend to be from the prediction line.

The applications of the method of least squares curve fitting using polynomials are briefly discussed as follows.

- The Least-Squares Line: The least-squares line method uses a straight line $y = a + bx$ to approximate the given set of data $(x_1, y_1), (x_2, y_2), \ldots, (x_n, y_n)$.

- The Least-Squares Parabola: The least-squares parabola method uses a second-degree curve $y = a + bx + cx^2$ to approximate the given set of data $(x_1, y_1), (x_2, y_2), \ldots, (x_n, y_n)$.

- The Least-Squares $m$th Degree Polynomials: The least-squares $m$th degree polynomials method uses $m$th degree polynomials $y = a_0 + a_1 x + a_2 x^2 + \cdots + a_m x^m$ to approximate the given set of data $(x_1, y_1), (x_2, y_2), \ldots, (x_n, y_n)$.

- Multiple Regression Least-Squares: Multiple regression estimates outcomes that may be affected by more than one control parameter, or cases where more than one control parameter is changed at the same time.
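As a rough illustration of the least-squares line and the standard error of the estimate discussed in this section, the sketch below fits $y = a + bx$ to a small made-up data set. The function names `fit_line` and `standard_error_of_estimate` and the sample values are assumptions for illustration only. The standard error here divides by $n - 2$, which is one common convention; some texts divide by $n$ instead.

```python
import math


def fit_line(xs, ys):
    """Return (a, b) for the least-squares line y = a + b*x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of squared x-deviations and sum of x/y cross-deviations.
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx            # slope
    a = mean_y - b * mean_x  # y-intercept
    return a, b


def standard_error_of_estimate(xs, ys, a, b):
    """Typical distance of the points from the line (n - 2 convention, assumed)."""
    n = len(xs)
    sse = sum((y - (a + b * x)) ** 2 for x, y in zip(xs, ys))
    return math.sqrt(sse / (n - 2))


# Hypothetical data, chosen only to keep the arithmetic easy to follow.
xs = [1, 2, 3, 4, 5]
ys = [2, 4, 5, 4, 5]

a, b = fit_line(xs, ys)
see = standard_error_of_estimate(xs, ys, a, b)
print(f"intercept a = {a:.3f}, slope b = {b:.3f}, standard error = {see:.3f}")
```

A smaller standard error means the points sit closer to the prediction line, so predictions made from the line are more precise.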

Field data is often accompanied by noise. Even though all control parameters (independent variables) remain constant, the resultant outcomes (dependent variables) vary. A process of quantitatively estimating the trend of the outcomes, also known as regression or curve fitting, therefore becomes necessary.

The curve fitting process fits equations of approximating curves to the raw field data. For a given set of data, however, the fitting curves of a given type are generally not unique, so a curve with minimal deviation from all data points is desired. This best-fitting curve can be obtained by the method of least squares.

The method of least squares assumes that the best-fit curve of a given type is the curve that has the minimal sum of squared deviations (least square error) from a given set of data. Suppose the data points are $(x_1, y_1), (x_2, y_2), \ldots, (x_n, y_n)$, where $x$ is the independent variable and $y$ is the dependent variable. The fitting curve $f(x)$ has a deviation (error) $d_i$ from each data point, i.e., $d_1 = y_1 - f(x_1)$, $d_2 = y_2 - f(x_2)$, ..., $d_n = y_n - f(x_n)$. According to the method of least squares, the best-fitting curve has the property that

$$\sum_{i=1}^{n} d_i^2 = \sum_{i=1}^{n} \left[ y_i - f(x_i) \right]^2$$

is a minimum.

Polynomials are one of the most commonly used types of curves in regression.
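To make the least-squares criterion concrete, the sketch below scores a few candidate curves against the same noisy data by their sum of squared deviations and picks the smallest. The helper `sum_squared_deviations`, the data, and the candidate curves are all hypothetical; the first candidate happens to be the least-squares line for these particular points, and the other two are arbitrary alternatives.

```python
def sum_squared_deviations(f, data):
    """Sum of d_i**2, where d_i = y_i - f(x_i) for each data point."""
    return sum((y - f(x)) ** 2 for x, y in data)


# Hypothetical noisy readings (x = control parameter, y = outcome).
data = [(1, 2), (2, 4), (3, 5), (4, 4), (5, 5)]

# Several curves of the same type (straight lines) can be drawn through
# the data; least squares prefers the one with the smallest score.
candidates = {
    "y = 2.2 + 0.6x": lambda x: 2.2 + 0.6 * x,
    "y = 1.0 + 1.0x": lambda x: 1.0 + 1.0 * x,
    "y = 4.0": lambda x: 4.0,
}

scores = {name: sum_squared_deviations(f, data) for name, f in candidates.items()}
best = min(scores, key=scores.get)

for name, score in scores.items():
    print(f"{name}: sum of squared deviations = {score:.2f}")
print("best of these candidates:", best)
```

Comparing a handful of candidates only illustrates the criterion; an actual fit solves for the coefficients that minimize the sum directly.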